Perovskites power up the solar industry

Tsutomu Miyasaka was on a mission to build a better solar cell. It was the early 2000s, and the Japanese scientist wanted to replace the delicate molecules that he was using to capture sunlight with a sturdier, more effective option.

So when a student told him about an unfamiliar material with unusual properties, Miyasaka had to try it. The material was “very strange,” he says, but he was always keen on testing anything that might respond to light.

Other scientists were running electricity through the material, called a perovskite, to generate light. Miyasaka, at Toin University of Yokohama in Japan, wanted to know if the material could also do the opposite: soak up sunlight and convert it into electricity. To his surprise, the idea worked. When he and his team replaced the light-sensitive components of a solar cell with a very thin layer of the perovskite, the illuminated cell pumped out a little bit of electric current.

The result, reported in 2009 in the Journal of the American Chemical Society, piqued the interest of other scientists, too. The perovskite’s properties made it (and others in the perovskite family) well-suited to efficiently generate energy from sunlight. Perhaps, some scientists thought, this perovskite might someday be able to outperform silicon, the light-absorbing material used in more than 90 percent of solar cells around the world.

Initial excitement quickly translated into promising early results. An important metric for any solar cell is how efficient it is — that is, how much of the sunlight that strikes its surface actually gets converted to electricity. By that standard, perovskite solar cells have shone, increasing in efficiency faster than any previous solar cell material in history. The meager 3.8 percent efficiency reported by Miyasaka’s team in 2009 is up to 22 percent this year. Today, the material is almost on par with silicon, which scientists have been tinkering with for more than 60 years to bring to a similar efficiency level.

“People are very excited because [perovskite’s] efficiency number has climbed so fast. It really feels like this is the thing to be working on right now,” says Jao van de Lagemaat, a chemist at the National Renewable Energy Laboratory in Golden, Colo.

Now, perovskite solar cells are at something of a crossroads. Lab studies have proved their potential: They are cheaper and easier to fabricate than time-tested silicon solar cells. Though perovskites are unlikely to completely replace silicon, the newer materials could piggyback onto existing silicon cells to create extra-effective cells. Perovskites could also harness solar energy in new applications where traditional silicon cells fall flat — as light-absorbing coatings on windows, for instance, or as solar panels that work on cloudy days or even absorb ambient sunlight indoors.

Whether perovskites can make that leap, though, depends on current research efforts to fix some drawbacks. Their tendency to degrade under heat and humidity, for example, is not a great characteristic for a product meant to spend hours in the sun. So scientists are trying to boost stability without killing efficiency.

“There are challenges, but I think we’re well on our way to getting this stuff stable enough,” says Henry Snaith, a physicist at the University of Oxford. Finding a niche for perovskites in an industry so dominated by silicon, however, requires thinking about solar energy in creative ways.

Leaping electrons

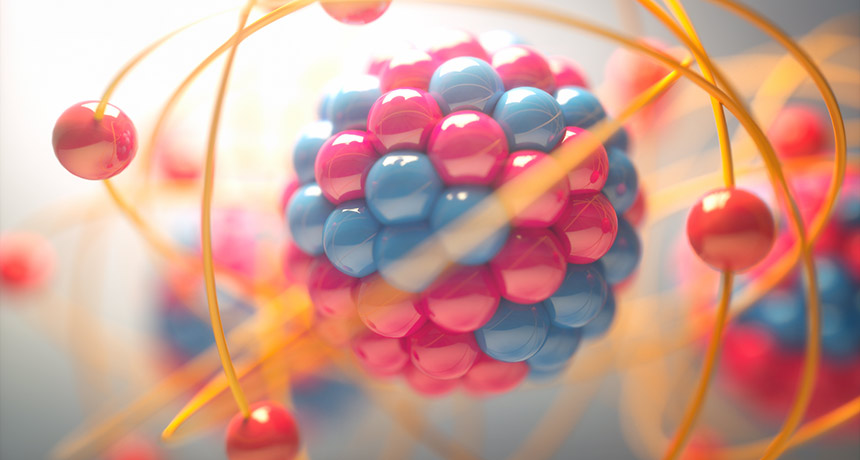

Perovskites flew under the radar for years before becoming solar stars. The first known perovskite was a mineral, calcium titanate, or CaTiO3, discovered in the 19th century. In more recent years, perovskites have expanded to a class of compounds with a similar structure and chemical recipe — a 1:1:3 ingredient ratio — that can be tweaked with different elements to make different “flavors.”

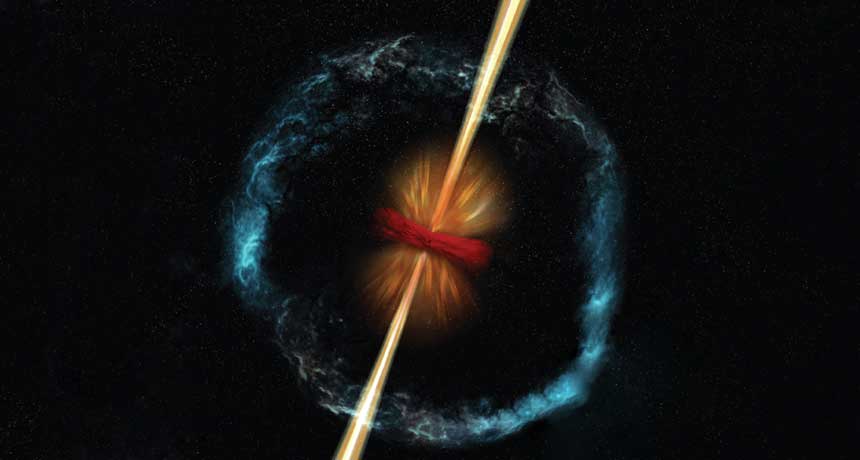

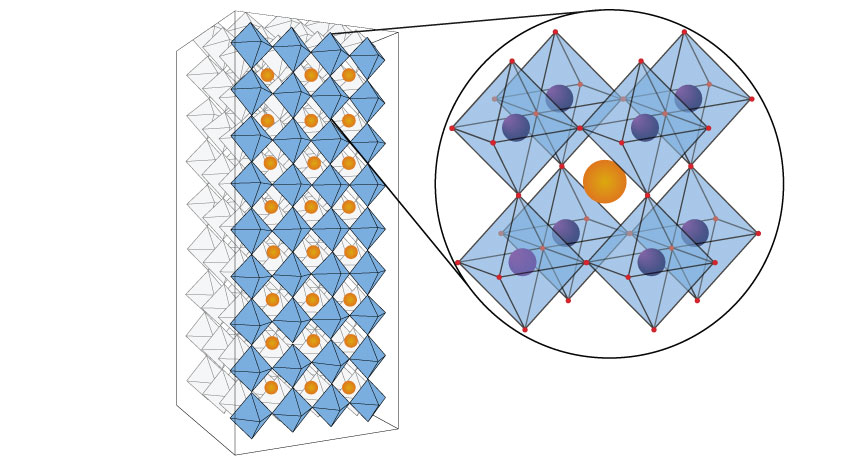

But the perovskites being studied for the light-absorbing layer of solar cells are mostly lab creations. Many are lead halide perovskites, which combine a lead ion and three ions of iodine or a related element, such as bromine, with a third type of ion (usually something like methylammonium). Those ingredients link together to form perovskites’ hallmark cagelike pyramid-on-pyramid structure. Swapping out different ingredients (replacing lead with tin, for instance) can yield many kinds of perovskites, all with slightly different chemical properties but the same basic crystal structure.

Perovskites owe their solar skills to the way their electrons interact with light. When sunlight shines on a solar panel, photons — tiny packets of light energy — bombard the panel’s surface like a barrage of bullets and get absorbed. When a photon is absorbed into the solar cell, it can share some of its energy with a negatively charged electron. Electrons are attracted to the positively charged nucleus of an atom. But a photon can give an electron enough energy to escape that pull, much like a video game character getting a power-up to jump a motorbike across a ravine. As the energized electron leaps away, it leaves behind a positively charged hole. A separate layer of the solar cell collects the electrons, ferrying them off as electric current.

The amount of energy needed to kick an electron over the ravine is different for every material. And not all photon power-ups are created equal. Sunlight contains low-energy photons (infrared light) and high-energy photons (sunburn-causing ultraviolet radiation), as well as all of the visible light in between.

Photons with too little energy “will just sail right on through” the light-catching layer and never get absorbed, says Daniel Friedman, a photovoltaic researcher at the National Renewable Energy Lab. Only a photon that comes in with energy higher than the amount needed to power up an electron will get absorbed. But any excess energy a photon carries beyond what’s needed to boost up an electron gets lost as heat. The more heat lost, the less efficient the cell.

Because the photons in sunlight vary so much in energy, no solar cell will ever be able to capture and optimally use every photon that comes its way. So you pick a material, like silicon, that’s a good compromise — one that catches a decent number of photons but doesn’t waste too much energy as heat, Friedman says.

Although it has dominated the solar cell industry, silicon can’t fully use the energy from higher-energy photons; the material’s solar conversion efficiency tops out at around 30 percent in theory and has hit 20-some percent in practice. Perovskites could do better. The electrons inside perovskite crystals require a bit more energy to dislodge. So when higher-energy photons come into the solar cell, they devote more of their energy to dislodging electrons and generating electric current, and waste less as heat. Plus, by changing the ingredients and their ratios in a perovskite, scientists can adjust the photons it catches. Using different types of perovskites across multiple layers could allow solar cells to more effectively absorb a broader range of photons.
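The threshold-and-heat picture described above can be put into rough numbers. The sketch below is a simplified illustration, not a model used by the researchers quoted here: it treats each material as having a single energy threshold (its band gap), and the two threshold values are assumptions that do not appear in this article (about 1.12 electron volts for silicon, a commonly cited figure, and a round 1.6 electron volts for a lead halide perovskite). The function names are hypothetical.

```python
# Simplified single-threshold model of photon absorption.
# The band-gap values below are assumptions for illustration only.

HC_EV_NM = 1239.84  # Planck constant times speed of light, in eV*nm

SILICON_GAP_EV = 1.12     # commonly cited approximate value (assumption)
PEROVSKITE_GAP_EV = 1.6   # rough figure for a lead halide perovskite (assumption)


def photon_energy_ev(wavelength_nm: float) -> float:
    """Energy of a single photon of the given wavelength, in electron volts."""
    return HC_EV_NM / wavelength_nm


def absorb(wavelength_nm: float, band_gap_ev: float):
    """Return (absorbed, useful_ev, heat_ev) for one photon.

    A photon with less energy than the band gap passes through unabsorbed.
    Otherwise only band_gap_ev is usable and the excess is lost as heat.
    """
    energy = photon_energy_ev(wavelength_nm)
    if energy < band_gap_ev:
        return (False, 0.0, 0.0)
    return (True, band_gap_ev, energy - band_gap_ev)


# Violet, red, and two infrared wavelengths, in nanometers.
for wavelength in (400, 700, 1000, 1300):
    for name, gap in (("silicon", SILICON_GAP_EV),
                      ("perovskite", PEROVSKITE_GAP_EV)):
        absorbed, useful, heat = absorb(wavelength, gap)
        status = "absorbed" if absorbed else "passes through"
        print(f"{wavelength} nm, {name}: {status}, "
              f"useful {useful:.2f} eV, heat {heat:.2f} eV")
```

Under these assumed values, a violet photon wastes less energy as heat in the perovskite than in silicon, while a 1,000-nanometer infrared photon is caught by silicon but sails through the perovskite. That is the trade-off Friedman describes.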

Perovskites have a second efficiency perk. When a photon excites an electron inside a material and leaves behind a positively charged hole, there’s a tendency for the electron to slide right back into a hole. This recombination, as it’s known, is inefficient — an electron that could have fed an electric current instead just stays put.

In perovskites, though, excited electrons usually migrate quite far from their holes, Snaith and others have found by testing many varieties of the material. That boosts the chances the electrons will make it out of the perovskite layer without landing back in a hole.

“It’s a very rare property,” Miyasaka says. It makes for an efficient sunlight absorber.

Some properties of perovskites also make them easier than silicon to turn into solar cells. Making a conventional silicon solar cell requires many steps, all done in just the right order at just the right temperature — something like baking a fragile soufflé. The crystals of silicon have to be perfect, because even small defects in the material can hurt its efficiency. The need for such precision makes silicon solar cells more expensive to produce.

Perovskites are more like brownies from a box — simpler, less finicky. “You can make it in an office, basically,” says materials scientist Robert Chang of Northwestern University in Evanston, Ill. He’s exaggerating, but only a little. Perovskites are made by essentially mixing a bunch of ingredients together and depositing them on a surface in a thin, even film. And while making crystalline silicon requires temperatures up to 2000° Celsius, perovskite crystals form at easier-to-reach temperatures — lower than 200°.

Seeking stability

In many ways, perovskites have become even more promising solar cell materials over time, as scientists have uncovered exciting new properties and finessed the materials’ use. But no material is perfect. So now, scientists are searching for ways to overcome perovskites’ real-world limitations. The most pressing issue is their instability, van de Lagemaat says. The high efficiency levels reported from labs often last only days or hours before the materials break down.

Tackling stability is a less flashy problem than chasing efficiency records, van de Lagemaat points out, which is perhaps why it’s only now getting attention. Stability isn’t a single number that you can flaunt, like an efficiency value. It’s also a bit harder to define, especially since how long a solar cell lasts depends on environmental conditions like humidity and precipitation levels, which vary by location.

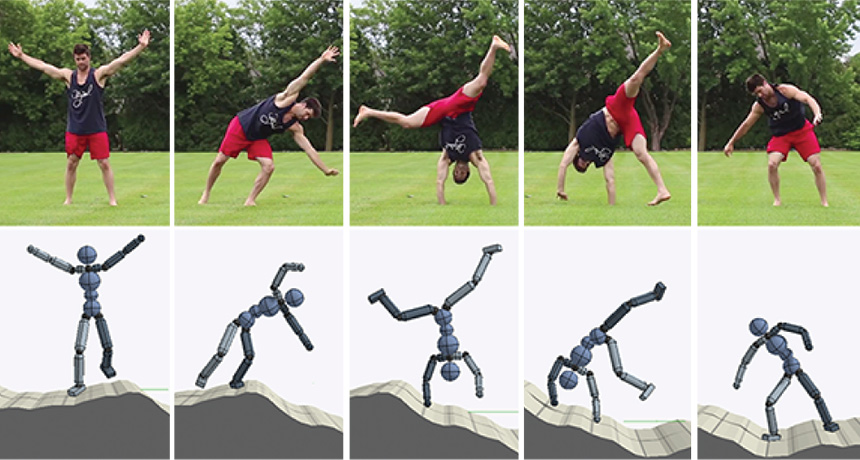

Encapsulating the cell with water-resistant coatings is one strategy, but some scientists want to bake stability into the material itself. To do that, they’re experimenting with different perovskite designs. For instance, solar cells containing stacks of flat, graphenelike sheets of perovskites seem to hold up better than solar cells with the standard three-dimensional crystal and its interwoven layers.

In these 2-D perovskites, some of the methylammonium ions are replaced by something larger, like butylammonium. Swapping in the bigger ion forces the crystal to form in sheets just nanometers thick, which stack on top of each other like pages in a book, says chemist Aditya Mohite of Los Alamos National Laboratory in New Mexico. The butylammonium ion, which naturally repels water, forms spacer layers between the 2-D sheets and stops water from permeating into the crystal.

Getting the 2-D layers to line up just right has proved tricky, Mohite says. But by precisely controlling the way the layers form, he and colleagues created a solar cell that runs at 12.5 percent efficiency while standing up to light and humidity longer than a similar 3-D model, the team reported in 2016 in Nature. Although it was protected with a layer of glass, the 3-D perovskite solar cell lost performance rapidly, within a few days, while the 2-D perovskite withered only slightly. (After three months, the 2-D version was still working almost as well as it had been at the beginning.)

Despite the seemingly complex structure of the 2-D perovskites, they are no more complicated to make than their 3-D counterparts, says Mercouri Kanatzidis, a chemist at Northwestern and a collaborator on the 2-D perovskite project. With the right ingredients, he says, “they form on their own.”

His goal now is to boost the efficiency of 2-D perovskite cells, which don’t yet match up to their 3-D counterparts. And he’s testing different water-repelling ions to reach an ideal stability without sacrificing efficiency.

Other scientists have mixed 2-D and 3-D perovskites to create an ultra-long-lasting cell — at least by perovskite standards. A solar panel made of these cells ran at only 11 percent efficiency, but held up for 10,000 hours of illumination, or more than a year, according to research published in June in Nature Communications. And, importantly, that efficiency was maintained over an area of about 50 square centimeters, more on par with real-world conditions than the teeny-tiny cells made in most research labs.

A place for perovskites?

With boosts to their stability, perovskite solar cells are getting closer to commercial reality. And scientists are assessing where the light-capturing material might actually make its mark.

Some fans have pitted perovskites head-to-head with silicon, suggesting the newbie could one day replace the time-tested material. But a total takeover probably isn’t a realistic goal, says Sarah Kurtz, codirector of the National Center for Photovoltaics at the National Renewable Energy Lab.

“People have been saying for decades that silicon can’t get lower in cost to meet our needs,” Kurtz says. But, she points out, the price of solar energy from silicon-based panels has dropped far lower than people originally expected. There are a lot of silicon solar panels out there, and a lot of commercial manufacturing plants already set up to deal with silicon. That’s a barrier to a new technology, no matter how great it is. Other silicon alternatives face the same limitation. “Historically, silicon has always been dominant,” Kurtz says.

For Snaith, that’s not a problem. He cofounded Oxford Photovoltaics Limited, one of the first companies trying to commercialize perovskite solar cells. His team is developing a solar cell with a perovskite layer over a standard silicon cell to make a super-efficient double-decker cell. That way, Snaith says, the team can capitalize on the massive amount of machinery already set up to build commercial silicon solar cells.

A perovskite layer on top of silicon would absorb higher-energy photons and turn them into electricity. Lower-energy photons that couldn’t excite the perovskite’s electrons would pass through to the silicon layer, where they could still generate current. By combining multiple materials in this way, it’s possible to catch more photons, making a more efficient cell.
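The division of labor between the two layers can be sketched in a few lines. This is an idealized illustration, not a description of Snaith’s device: it assumes the top layer catches every photon above its energy threshold, and the threshold values (1.6 electron volts for the perovskite, 1.12 for silicon) are assumptions that do not come from this article. The function name is hypothetical.

```python
# Idealized two-layer ("double-decker") cell: each photon is used by the
# first layer whose energy threshold it can clear. Threshold values are
# assumptions for illustration only.

HC_EV_NM = 1239.84  # Planck constant times speed of light, in eV*nm


def tandem_absorb(wavelength_nm: float,
                  top_gap_ev: float = 1.6,      # perovskite layer (assumption)
                  bottom_gap_ev: float = 1.12): # silicon layer (assumption)
    """Return (layer, useful_ev, heat_ev) for one photon hitting the stack."""
    energy = HC_EV_NM / wavelength_nm
    if energy >= top_gap_ev:
        # Higher-energy photon: caught by the perovskite on top.
        return ("perovskite", top_gap_ev, energy - top_gap_ev)
    if energy >= bottom_gap_ev:
        # Passed through the perovskite but still usable by the silicon.
        return ("silicon", bottom_gap_ev, energy - bottom_gap_ev)
    # Too little energy for either layer.
    return ("none", 0.0, 0.0)


for wavelength in (400, 700, 1000, 1300):
    layer, useful, heat = tandem_absorb(wavelength)
    print(f"{wavelength} nm -> {layer}: useful {useful:.2f} eV, heat {heat:.2f} eV")
```

In this toy model, a 400-nanometer photon yields 1.6 electron volts of useful energy in the stack, compared with 1.12 in silicon alone, and a 1,000-nanometer photon that the perovskite would miss is still put to work by the silicon underneath.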

That idea isn’t new, Snaith points out: For years, scientists have been layering various solar cell materials in this way. But these double-decker cells have traditionally been expensive and complicated to make, limiting their applications. Perovskites’ ease of fabrication could change the game. Snaith’s team is seeing some improvement already, bumping the efficiency of a silicon solar cell from 10 to 23.6 percent by adding a perovskite layer, for example. The team reported that result online in February in Nature Energy.

Rather than compete with silicon solar panels for space on sunny rooftops and in open fields, perovskites could also bring solar energy to totally new venues.

“I don’t think it’s smart for perovskites to compete with silicon,” Miyasaka says. Perovskites excel in other areas. “There’s a whole world of applications where silicon can’t be applied.”

Silicon solar cells don’t work as well on rainy or cloudy days, or indoors, where light is less direct, he says. Perovskites shine in these situations. And while traditional silicon solar cells are opaque, very thin films of perovskites could be printed onto glass to make sunlight-capturing windows. That could be a way to bring solar power to new places, turning glassy skyscrapers into serious power sources, for example. Perovskites could even be printed on flexible plastics to make solar-powered coatings that charge cell phones.

That printing process is getting closer to reality: Scientists at the University of Toronto recently reported a way to make all layers of a perovskite solar cell at temperatures below 150° Celsius, covering not just the light-absorbing perovskite layer but also the background workhorse layers that carry the electrons away and funnel them into current. That could streamline and simplify the production process, making mass newspaper-style printing of perovskite solar cells more doable.

Printing perovskite solar cells on glass is also an area of interest for Oxford Photovoltaics, Snaith says. The company’s ultimate target is to build a perovskite cell that will last 25 years, as long as a traditional silicon cell.